The Universal Baseball Association: Multisets, stars and bars, and the negative binomial distribution

Some 20th-century literature, some combinatorics

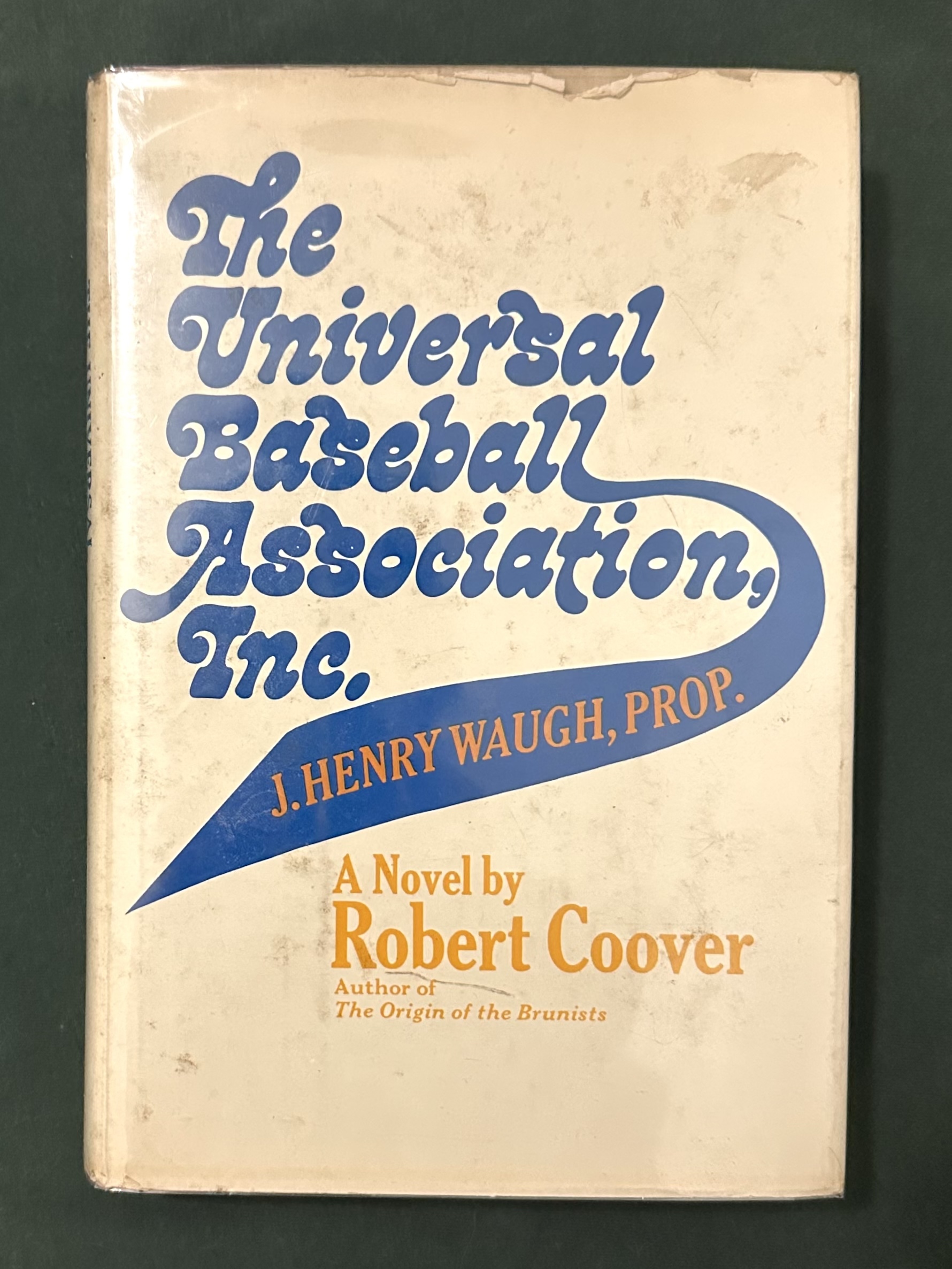

In Robert Coover’s 1968 novel The Universal Baseball Association, Inc., J. Henry Waugh, Prop., the protagonist invents a fantasy baseball league whose outcomes hinge on the repeated roll of three dice, the main randomizer of his simulated world. When Coover explains the scheme for the first time, he declares these combinatorics:

Henry had spent the better part of two months just working with the problem of odds and equilibrium points in an effort to approximate that complexity. Two dice had not done it. He’d tried three, each a different color, and the 216 combinations had provided the complexity, all right, but he’d nearly gone blind trying to sort the colors on each throw. Finally, he’d compromised, keeping the three dice, but all white, reducing the total number of combinations to 56.1

On this core randomization, Henry grafts additional branches of fanciful logic. For instance, rolling a $\{1, 1, 1\}$ or a $\{6, 6, 6\}$ twice in a row will trigger a lookup from the “Chart of Extraordinary Occurrences,” where he further maps a dice roll to outlandish events like fistfights or even death by beanball or line drive.

The 216 figure is straightforward: that’s just 6 faces, cubed. But how about the 56? In reviewing this fairly straightforward problem, I stumbled across the “stars and bars” counting method, which I remember reading about on Stack Overflow a long time ago. It turns out that stars and bars, multisets, and the negative binomial distribution are three different ways of looking at the same counting problem. So I wanted to record that relationship in this little post.

Multisets

For starters, note that, in Henry’s simulation, we are not talking about the sum of the dice. It is also not as easy as dividing out some repetitions from the 216 total.

The concept, where rolling a $\{1, 2, 1\}$ is equivalent to rolling a $\{1, 1, 2\}$, is exactly the idea of a multiset: order does not matter, just as in a regular set, but elements can be repeated. Because elements have associated multiplicities, this is not quite the standard permutation or the standard combination that we’re dealing with.

So how many multisets are there of cardinality k, with n possible values? The formula is

[ \binom{n + k - 1}{k} ]

In Henry’s case, $k = 3$ (cardinality of 3 dice in a roll) and $n = 6$ (possible values). How can we derive this?

Stars and bars

This counting method gets its name from representing the problem graphically in the following way. Let’s say that Henry rolls a $\{5, 3, 3\}$. Imagine 6 bins for the 6 dice values, separated by 5 bars:

__|__|__|__|__|__

In this representation, $\{5, 3, 3\}$ would look like

__|__|**|__|* |__

where 3’s multiplicity of 2 is represented by 2 stars in the appropriate bin. Now simplifying things by omitting the notation of the empty bins entirely, we get this very schematic representation of

||**||*|

Overall, the “stars and bars” graphic reduces to this: we have $6 - 1 = 5$ dividing bars and 3 stars, for a total of 8 positions. Note that 8 is one less than $n + k$. And we want count the different ways to arrange the 3 stars in those 8 positions. This is 8 choose 3.

Thus, we have $(n + k - 1)$ positions—stars and bars in total—and we want combinations of that, taken $k$ at a time. Now we can finally use the standard combinations formula, with the stars-and-bars representation in mind.

[ \binom{n + k - 1}{k} = \binom{8}{3} = \frac{8!}{3!5!} = 8 * 7 * 6/(3!) = 56 ]

Note the identity of $\binom{x}{y} = \binom{x}{x - y}$, and so this could also be written as

[ \binom{n + k - 1}{n - 1} ]

Conceptually, this makes sense: you can either think of it as combinations of 8 positions, taken 3 at a time for stars, or taken 5 at a time for bars.

Negative binomial distribution

A random variable $X$ is distributed negative binomial if it counts the number of Bernoulli trials (with probability $p$) that it takes to get exactly $k$ failures before the $r$th success on the final trial. Its probability mass function can be written as

[ \Pr(X = k) = \binom{r + k - 1}{k}(1 - p)^k p^r ]

The probabilities after the combinations term are clear: $k$ failures with $(1 - p)$ probability and $r$ successes with $p$ probability. And the combinations term is exactly the same as the stars and bars formula! Walking through it:

- We have to get through exactly $k$ failures and $(r - 1)$ successes before hitting the $r$th success on the final trial.

- Out of those $(r + k - 1)$ trials, we want to count combinations taken $k$ failures at a time, or taken $(r - 1)$ successes at a time.

- So look at that! Exactly the same as stars and bars. Thus, we have:

[ \binom{r + k - 1}{k} = \binom{r + k - 1}{r - 1} ]

Overall, multisets are all about putting $k$ indistinguishable objects/balls/whatever (like the 3 dice) into $n$ distinguishable bins (the 6 values)—and multiple balls can occupy one bin. This beautifully matches the negative binomial setup as well, with the derivation above as the cleanest explanation. But if it helps, as a final check, to map this exactly to balls and bins, you are basically placing $k$ indistinguishable failures into $r$ success “bins.” There are exactly $r$ success bins—essentially like “inter-success intervals”—because

- the 1st bin contains any failures from 0 successes to the 1st success,

- the 2nd bin contains any failures from the 1st success to the 2nd success,

- and so on through $r$, where the Bernoulli trials stop.

-

pp. 19–20 in the first edition (Random House, 1968). The novel was recently reissued by New York Review Books in March 2026. ↩︎